What Makes a Career Assessment Credible? A Practical Buyer’s Checklist

Most career assessments are easier to market than to evaluate.

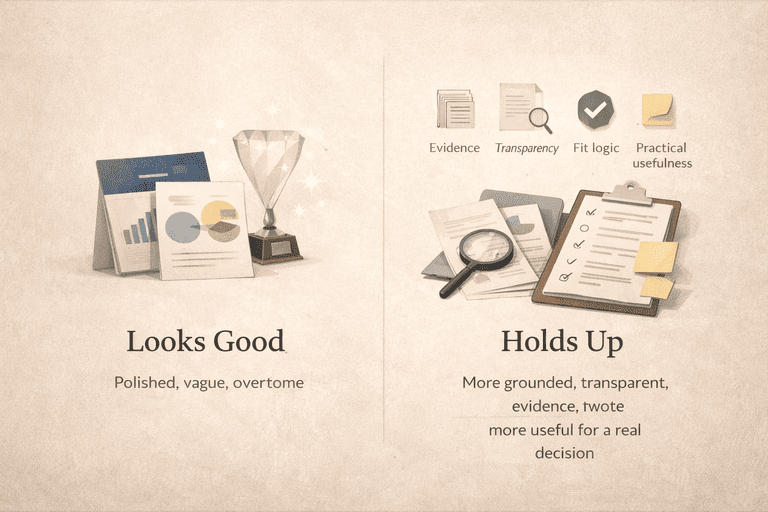

The page looks polished. The quiz feels psychologically interesting. The results sound flattering. Maybe the product even uses words like "science," "AI," "psychometrics," or "proprietary model."

None of that is enough.

If a career assessment is going to influence a real decision about work, money, training, or direction, the standard should be higher than "the site felt convincing." A credible assessment should make it easier to understand what it measures, what it does not measure, what the output is actually good for, and how much trust the buyer should place in the result.

The Short Answer

A credible career assessment should do at least eight things well:

1. clearly explain what it measures

2. use a model that matches the decision it claims to support

3. show enough evidence or methodological transparency to justify trust

4. measure enough of the right constructs for the job it claims to do

5. make clear what the output is actually for

6. relate the output to the user's real situation rather than only abstract self-description

7. state its limitations honestly

8. show enough of its logic and disclosure posture that a careful buyer can tell what kind of trust it has actually earned

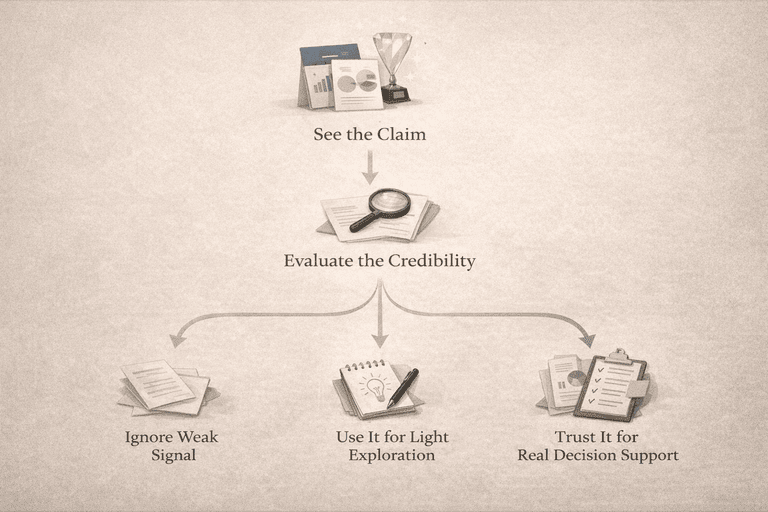

If a tool cannot do those things, it may still be entertaining or somewhat reflective. It just should not be treated like a serious decision aid.

Adults often use career assessments under real pressure: to evaluate a current role, to decide whether to change jobs, to think about retraining, or to assess whether a bigger shift is justified.[[1]](#ref-1) So the real question is: what kind of trust has this assessment actually earned before it gets influence over a real decision?

Why This Category Tricks Otherwise Careful Buyers

Career assessments sit in an unusual category. They borrow credibility from multiple directions:

- psychology language

- data language

- personality language

- career guidance language

- product-design polish

That combination can make weak tools look stronger than they are. A result can feel personal and accurate without being strong career guidance. A recommendation can feel plausible without being meaningfully diagnostic.

This is one reason buyer discipline matters so much here. You are not only evaluating whether the test is interesting. You are evaluating whether the system behind it deserves influence over a real decision.

First: What Job Is The Assessment Actually Built To Do?

This is the first question almost nobody asks directly.

Before you evaluate whether a tool is credible, you have to know what it is even trying to do.

A career assessment may be built mainly for:

- self-reflection

- broad exploration

- interest profiling

- personality description

- current-role diagnosis

- adjacent-career reasoning

- next-step decision support

Those are not the same thing.

This is where many buyers get confused. They take a self-reflection tool and expect diagnosis. Or they use a broad exploration platform and expect a narrow next-step answer. Or they take a personality-driven product and expect it to tell them whether their dissatisfaction is burnout, bad management, or deep mismatch.

That mismatch between tool and problem is one of the biggest reasons people feel disappointed even when the assessment is functioning as designed.

So the first credibility question is simple:

Does the model match the problem the product claims to solve?

If not, everything downstream gets weaker.

The Audit

Here is the practical audit I would use before treating a career assessment like a serious decision aid.

1. Model Clarity

A credible assessment should tell you, in plain language, what it measures.

Not vague language. Not only marketing language. The actual model.

Examples of acceptable clarity:

- interests

- motivations

- personality traits

- work-behavior strengths

- values

- work environment preferences

If the assessment does not clearly explain the main dimensions behind the result, that is a red flag. The buyer should not have to guess whether the system is driven by personality typing, vocational interests, trait scales, observed behavior patterns, or a generic recommendation engine.

Model clarity matters because a tool that hides the model also makes it much harder to judge the output responsibly.

2. Construct Coverage

This is the next big question.

A credible system should not ask one thin construct to do the work of a whole career decision.

For example:

- personality is useful, but not enough on its own for career fit[[2]](#ref-2)

- vocational interests are useful, but they are not the same thing as motivation or actual work constraints[[3]](#ref-3)

- broad self-reflection can be helpful, but it is not automatically a diagnostic model

This is one reason current research on personality and vocational interests matters. The domains are connected, but distinct.[[3]](#ref-3) So a system that treats one personality-style result as a complete career-fit engine is asking too much from too little.

The buyer question is:

Does the tool measure enough of the right things to support the kind of conclusion it is making?

3. Evidence Quality

This is where many products become thin.

The assessment should not only say it is "backed by science." It should give you some way to inspect what that means.

Useful signals include:

- named frameworks or constructs

- technical documentation or methodology notes

- evidence of reliability or internal consistency

- evidence that the output meaningfully connects to outcomes the product cares about

- transparent explanation of where the data comes from

Not every user will read a technical manual. That is fine. Credibility does not require every buyer to audit the evidence personally. It does require the evidence to exist in a form that can be inspected.
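
For a buyer who does want to check what a reliability claim means, internal consistency is commonly reported as Cronbach's alpha. The sketch below is a minimal illustration, not any vendor's method. The function name `cronbach_alpha` and the response data are hypothetical, and it assumes each row holds one respondent's numeric answers to items meant to measure the same construct.

```python
from statistics import pvariance


def cronbach_alpha(responses: list[list[float]]) -> float:
    """Cronbach's alpha for rows of respondents and columns of items."""
    n_items = len(responses[0])
    if n_items < 2:
        raise ValueError("need at least two items")
    # Variance of each item across respondents.
    item_vars = [pvariance([row[i] for row in responses]) for i in range(n_items)]
    # Variance of each respondent's total score.
    total_var = pvariance([sum(row) for row in responses])
    if total_var == 0:
        raise ValueError("total scores have zero variance")
    return (n_items / (n_items - 1)) * (1 - sum(item_vars) / total_var)


# Hypothetical data: four respondents answering three items on a 1-5 scale.
sample = [
    [4, 5, 4],
    [2, 2, 3],
    [5, 4, 5],
    [1, 2, 1],
]
print(round(cronbach_alpha(sample), 2))
```

Values closer to 1 mean the items move together. A high alpha only says the items are consistent with each other. It does not, by itself, show that the scale connects to the career outcomes the product cares about.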

If a product says "AI-powered" or "scientifically proven" without making the model or evidence any more legible, the claim adds little.

Professional testing standards reinforce the same expectation. The AERA/APA/NCME Standards for Educational and Psychological Testing put validity, reliability, fairness, documentation, and appropriate interpretation at the center of responsible test use, and they explicitly tie test selection and interpretation to the intended use of scores rather than to marketing language.[[7]](#ref-7)

A tool does not become credible because it has a polished interface and a psychologically plausible story. It becomes more credible when the evidence, documentation, and intended use line up.

4. Output Usefulness

A result can feel smart and still be low-utility.

This is the gap between descriptive accuracy and decision usefulness.

A useful output should help a person do something better, not only feel seen. Depending on the product category, that may mean:

- narrowing realistic options

- understanding why a current role feels wrong

- separating fixable friction from structural mismatch

- exploring adjacent options more intelligently

- seeing tradeoffs more clearly

If the output is only:

- a flattering label

- a personality story

- a long list of loosely related careers

then the tool may be interesting, but not strong enough for a harder decision.

This is also where career-specific professional standards matter. NCDA's assessment and evaluation competencies are built around the idea that career assessment use requires more than simply delivering a result. It requires understanding the purpose of the assessment, interpreting results appropriately, and using them in a way that actually supports career decision-making rather than replacing it.[[8]](#ref-8)

5. Current-Role Relevance

This matters especially for adults.

A credible assessment for adult career decisions should usually have some way to relate the result back to the user's current role or current situation. OECD guidance on adult career support keeps pointing toward this more practical use case: adults seek guidance to progress, change jobs, respond to labor-market shifts, and make better decisions from where they already are.[[1]](#ref-1)

That means a stronger adult-oriented assessment should help answer questions like:

- what is happening in my current role?

- what part of the mismatch is fixable?

- what should stay in my next move?

- how large does this change actually need to be?

If the tool begins and ends in abstract self-description, it may still be useful. It is just probably weaker for adult decision support.

6. Limitation Honesty

This is one of the best signals of credibility.

A trustworthy system should tell you what it cannot do.

Examples:

- it is not a final verdict

- it does not replace judgment

- it cannot fully predict success from one result

- it is stronger for exploration than diagnosis

- it is stronger for reflection than for action planning

When a product acts like one assessment can resolve all of career fit, all of motivation, all of skill transfer, and all of next-step planning in one pass, that is usually a warning sign.

Credible tools usually become more trustworthy when they are willing to narrow their own claims.

7. Transparency About Data And Matching Logic

The buyer does not need every weight, threshold, or algorithm disclosed.

But they should have enough visibility to understand the rough pathway from:

- responses

- to interpretation

- to recommendation

This is especially important for tools that map users against career databases, fit systems, or recommendation engines. If the path from input to result is a total black box, buyers have to trust far more than they can inspect.

Some proprietary boundary is normal. Total opacity is not.
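
To make the idea of a visible pathway concrete, here is a toy sketch of responses flowing to interpretation and then to a recommendation that carries its own reasons. Everything in it is an assumption for illustration: the item key, the two scale names, the occupation profiles, and the gap-based ranking are hypothetical and do not describe how any real product works.

```python
from statistics import mean

# Hypothetical item-to-construct key: which questions feed which scale.
SCALE_ITEMS = {
    "investigative": ["q1", "q2"],
    "social": ["q3", "q4"],
}

# Hypothetical occupation profiles on the same 1-5 scales.
OCCUPATIONS = {
    "data analyst": {"investigative": 5, "social": 2},
    "school counselor": {"investigative": 2, "social": 5},
}


def score_scales(responses: dict[str, int]) -> dict[str, float]:
    """Responses -> interpretation: mean score per construct."""
    return {
        scale: mean(responses[q] for q in items)
        for scale, items in SCALE_ITEMS.items()
    }


def recommend(scores: dict[str, float]) -> list[dict]:
    """Interpretation -> recommendation, smallest total gap first."""
    results = []
    for name, profile in OCCUPATIONS.items():
        # Keep the per-scale gaps so the ranking can be explained.
        gaps = {s: abs(scores[s] - profile[s]) for s in profile}
        results.append(
            {"occupation": name, "total_gap": sum(gaps.values()), "gaps": gaps}
        )
    return sorted(results, key=lambda r: r["total_gap"])


# Hypothetical answers on a 1-5 scale.
answers = {"q1": 5, "q2": 4, "q3": 2, "q4": 1}
for row in recommend(score_scales(answers)):
    print(row["occupation"], row["total_gap"], row["gaps"])
```

The point is not the arithmetic. It is that each recommendation arrives with the scale gaps that produced it, so a buyer can see why one option ranked above another without needing the exact weights.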

8. Practical Fit With The Buying Situation

This final criterion is less scientific and more practical, but it matters. A credible assessment is not only internally respectable. It is also appropriate for the buyer's actual situation.

Examples:

- a free interest profiler may be very credible for early exploration

- a broad exploration platform may be credible for visibility and discovery

- a framework-led personality tool may be credible for self-reflection

- a more diagnostic system may be more credible for current-role decisions and adjacent-move reasoning

Credibility is partly about the tool itself and partly about whether the buyer is using it for the right job.
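
The eight criteria above can be kept as a simple checklist while reviewing a product. The sketch below is one hypothetical way to record the answers. The names `CRITERIA`, `AuditResult`, and `audit` are illustrative, and it deliberately sets no pass mark, since the article does not define one.

```python
from dataclasses import dataclass

# The eight audit criteria from this article, in order.
CRITERIA = (
    "model clarity",
    "construct coverage",
    "evidence quality",
    "output usefulness",
    "current-role relevance",
    "limitation honesty",
    "transparency about data and matching logic",
    "practical fit with the buying situation",
)


@dataclass
class AuditResult:
    passed: list[str]
    failed: list[str]

    @property
    def summary(self) -> str:
        return f"{len(self.passed)}/{len(CRITERIA)} criteria met"


def audit(answers: dict[str, bool]) -> AuditResult:
    """Split the criteria into met and unmet; unanswered counts as unmet."""
    passed = [c for c in CRITERIA if answers.get(c, False)]
    failed = [c for c in CRITERIA if not answers.get(c, False)]
    return AuditResult(passed, failed)


# Hypothetical answers for an imaginary product.
result = audit({"model clarity": True, "evidence quality": True})
print(result.summary)  # prints "2/8 criteria met"
for criterion in result.failed:
    print("unmet:", criterion)
```

Treating an unanswered criterion as unmet is a design choice that mirrors the article's stance: if the buyer cannot find the information, the product has not yet earned that piece of trust.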

Red Flags To Watch For

Some warning signs are simple.

Be careful if the assessment:

- refuses to explain what it measures

- implies one result explains everything

- makes grand predictive claims with no visible evidence

- uses science language without naming the model

- gives very broad recommendations with no decision logic

- has no visible limitations section or caution language

- feels more like identity entertainment than career guidance

None of those automatically make a product worthless. But together they usually mean the buyer should lower the amount of trust they place in the output.

More concrete examples of bad assessment behavior:

- a test that recommends careers but never explains what constructs drive the match

- a result page that gives a score but no interpretation of what the score should and should not mean

- a platform that claims scientific credibility but has no methodology, no technical note, and no named model

- a tool that treats one personality-style profile as enough to justify a major career move

- a product that never distinguishes exploration use from diagnosis use

- a paid assessment that hides what the output looks like until after purchase, making it hard to judge whether the result is reflective, diagnostic, or simply decorative

- a recommendation engine that gives a long occupation list but no explanation for why some options rose to the top and others did not

- a tool that talks about precision while refusing to say how recent the underlying occupational or matching data is

- a result page that sounds definitive even though the underlying input was a brief self-report with obvious construct gaps

Those are the kinds of behaviors that make a product feel persuasive without earning proportional trust.

Before You Pay, Pressure-Test The Product

If I were evaluating a paid career assessment, I would do this before spending money:

1. identify the actual decision I need help with

2. check whether the model fits that decision

3. inspect whether the product explains its constructs clearly

4. look for methodology, technical notes, or evidence signals

5. look for a sample report or enough output preview to judge whether the product produces interpretation or only presentation

6. see whether the output seems built for action or only reflection

7. look for explicit limitations

8. ask whether the product helps with my real situation or only with generic exploration

That eighth step matters more than buyers usually expect. A person deciding whether to leave a draining but well-paid role, retrain for a new field, or make an adjacent move needs a different standard than a student who mainly wants broad exposure to options.

That is a much better buying process than:

- "the page looked polished"

- "the result felt true"

- "the recommendation list seemed interesting"

Those things can still matter. They just should not carry the whole trust decision.

What Serious Products Usually Make Visible Before Purchase

Not every credible assessment discloses the same amount. But strong products usually make at least some of these things visible:

- what constructs are measured

- what kind of decision the assessment is designed to support

- how long the assessment takes

- what the output is meant to help with

- what kind of evidence supports the model

- whether the product offers sample output, methodology notes, or a technical explanation

- how recommendations are formed at a high level

- what data sources or occupational frameworks sit behind the recommendation layer

- what the result should not be used for

- enough methodology that a thoughtful buyer can tell the difference between a serious system and a black box with polished copy

That last point matters more than people think. A buyer does not need full algorithm disclosure. They do need enough public documentation to distinguish "this tool has real assessment logic behind it" from "this tool mainly has confident wording."

The strongest products also disclose these things in the right order. They make it easier to answer three practical questions before purchase:

1. what is this tool actually good for?

2. what kind of output will I receive?

3. what should I not expect it to do?

That is a meaningful trust signal because it lowers the amount of guesswork the buyer has to do before giving the product influence over an important decision.

What Even A Credible Assessment Still Cannot Promise

Even a credible assessment does not remove the need for judgment.

It does not guarantee:

- that the top recommendation is the right next role

- that a current-role problem is purely a fit problem rather than a team, manager, or compensation problem

- that a strong result today will still feel right after life constraints change

- that one assessment pass can replace conversations, experiments, or labor-market reality

That is not a weakness in the category. It is simply the boundary of what assessment can do. Good tools narrow the field, improve interpretation, and reduce avoidable mistakes. They do not turn a complex career decision into a single-click verdict.

Where CareerMeasure Fits In This Checklist

This checklist is also the standard I would apply to us.

CareerMeasure's argument is not that every other tool is worthless. The argument is that many tools are too thin for adult decision support because they emphasize description more than interpretation.

The design goal here is different:

- interests, motivations, and strengths instead of one thin self-description layer

- current-role interpretation before only abstract matching

- gap logic that distinguishes smaller issues from deeper mismatch

- adjacent-career reasoning instead of only idealized recommendations

- public methodology and credits so the system is not purely a black box

That is the category problem we are trying to solve. Whether we solve it well is something buyers should still judge critically. But the checklist points toward the same product philosophy: stronger career assessments should earn trust through clarity, scope discipline, and decision usefulness, not just personality language and polish.

Final Answer

A credible career assessment is not just interesting. It is legible, appropriately scoped, evidence-backed enough to justify trust, and honest about what it can and cannot do.

It should tell you what it measures, cover enough of the right constructs for the job it claims to do, connect the output to a real decision, and avoid pretending one layer of self-description is the whole answer.

If a career assessment cannot meet that standard, treat it as a reflection tool or an exploration aid, not a serious decision engine.

References

[1] OECD. Career Guidance for Adults in a Changing World of Work. https://www.oecd.org/en/publications/career-guidance-for-adults-in-a-changing-world-of-work_9a94bfad-en.html

[2] Judge, T. A., & Zapata, C. P. (2015). The person-situation debate revisited: Effect of situation strength and trait activation on the validity of the Big Five personality traits in predicting job performance. Academy of Management Journal, 58(4), 1149-1179. https://journals.aom.org/doi/abs/10.5465/amj.2010.0837

[3] Wille, B., & De Fruyt, F. (2025). Personality and vocational interests: Connections between two fundamental individual-differences construct domains. Current Opinion in Psychology, 66, 102103. https://www.sciencedirect.com/science/article/pii/S2352250X25001162

[4] O\*NET Resource Center. O\*NET Interest Profiler Services. https://services.onetcenter.org/ip

[5] O\*NET Resource Center. O\*NET Web Services and Data Documentation. https://www.onetcenter.org/services.html

[6] National Career Development Association. Use of Assessment Results. https://www.ncda.org/aws/NCDA/asset_manager/get_file/3395?ver=3403

[7] American Educational Research Association, American Psychological Association, & National Council on Measurement in Education. Standards for Educational and Psychological Testing (2014). https://www.testingstandards.net/uploads/7/6/6/4/76643089/9780935302356.pdf

[8] National Career Development Association. Career Counselor Assessment and Evaluation Competencies (2010). https://www.ncda.org/aws/NCDA/asset_manager/get_file/18143/aace-ncda_assmt_eval_competencies?ver=47302
